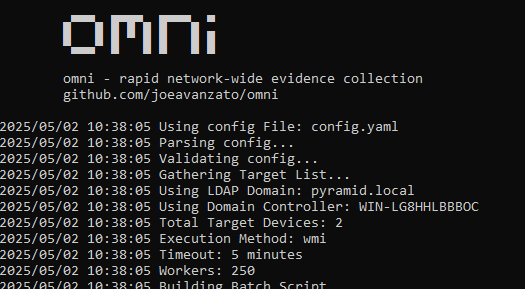

While pivoting on emerging command-and-control (C2) servers, I identified an open directory on an IP address (144.31.106[.]169) hosting what appeared to be a collection of malicious shell scripts, as shown below.

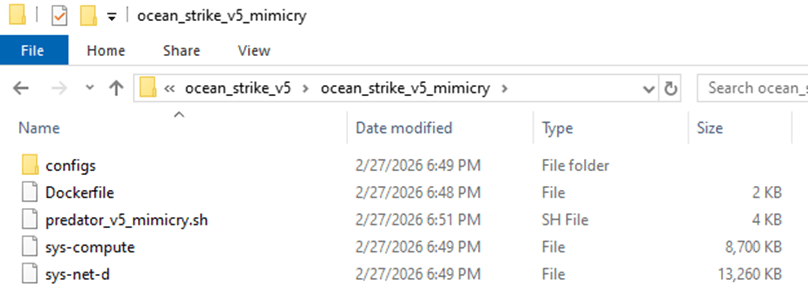

The first shell script, ‘mimicry.sh’, appears to serve as a staging script for the actual payload, ocean_strike_v5.tar.gz: it curls the payload into /tmp/.sys-task, extracts it, starts a secondary shell script, and then deletes the previously created directory. Let’s take a look inside ocean_strike_v5.tar.gz.

The script of interest, ‘predator_v5_mimicry.sh’, is a shell script designed to perform the following actions:

- Checks if it is running inside a container, sandbox, WSL, or other virtualization environment using /proc/1/cgroup

- Adds an SSH key to /root/.ssh/authorized_keys to maintain persistent access to the compromised device

- Attempts to set vm.nr_hugepages=1280 – increasing performance of certain workloads, including crypto-mining

- Attempts to identify any other mining-related processes and kill them, including anything that mentions guard, miner, YDServi, xmrig, nanominer, kdevtmpfsi, gost, pcpcat, or proxy.sh

- Creates directory /dev/shm/.sys-cache and copies some of the above files into it – sys-compute is copied as kworker and made executable.

- A service is also installed named ‘System Monitoring Service’ to ensure it is always running.

- The malware then configures what it refers to as a Mimicry Proxy via the binary sys-net-d seen above – this is installed into /usr/bin/sys-net-d with a service setup named ‘Network Monitoring Service’.

- The malware then attempts to build something it refers to as a ‘Mimicry Aquarium’ using the contained docker file, spawning a container named ‘sys-aquarium-mimicry’ and mapping host port 2376 to container port 2375.

- The container appears intended to serve as a distraction for other attackers: it installs some common Linux utilities such as curl and wget, creates fake artifacts like /root/proxy.sh, creates (but does not enable) a cron job that would run proxy.sh every 30 minutes, and then creates an empty file at /var/run/docker.sock

- The container itself is used to return a general HTTP 200 Status in response to any request to port 2375, the Docker Remote API.

- The attacker then uses iptables to redirect any inbound request destined for port 2375 to port 2376 – effectively capturing traffic destined for the Docker Remote API into the container, which then supplies a ‘fake’ response.

- This is done seemingly to prevent any additional tampering or exploitation from occurring that may interfere with their mining services.

- Their own IP address is excluded from this port mapping rule via iptables

- Finally, the attacker writes a bash script to /dev/shm/.sys-cache/watchdog.sh that periodically wakes and checks 144.31.106[.]169 for a 200 OK response. If that response is not received, it clears all iptables pre-routing rules and restarts the main Docker service.

- This is to ensure access to the true Docker API port is available remotely again for a potential re-compromise
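For defenders, the port redirect described above should be visible in the host's NAT table. The following is a minimal sketch, assuming the rules are dumped with `iptables-save -t nat` and that the redirect uses a `REDIRECT` or `DNAT` target. The exact rule written by the script is not reproduced in this post, so the sample text and the excluded source address (203.0.113.10, a documentation address) are hypothetical.

```python
DOCKER_API_PORT = "2375"
REWRITE_TARGETS = ("REDIRECT", "DNAT")

# Hypothetical iptables-save output; not taken from the analyzed script.
SAMPLE = """*nat
:PREROUTING ACCEPT [0:0]
-A PREROUTING -s 203.0.113.10/32 -p tcp -m tcp --dport 2375 -j RETURN
-A PREROUTING -p tcp -m tcp --dport 2375 -j REDIRECT --to-ports 2376
-A PREROUTING -p tcp -m tcp --dport 8080 -j REDIRECT --to-ports 80
COMMIT
"""


def find_docker_api_redirects(iptables_save_text: str) -> list:
    """Return PREROUTING rules that match destination port 2375 and rewrite it."""
    hits = []
    for line in iptables_save_text.splitlines():
        line = line.strip()
        if not line.startswith("-A PREROUTING"):
            continue
        tokens = line.split()
        pairs = list(zip(tokens, tokens[1:]))
        # A rule qualifies if it matches "--dport 2375" and jumps to a rewrite target.
        has_dport = ("--dport", DOCKER_API_PORT) in pairs
        has_rewrite = any(a == "-j" and b in REWRITE_TARGETS for a, b in pairs)
        if has_dport and has_rewrite:
            hits.append(line)
    return hits


if __name__ == "__main__":
    for rule in find_docker_api_redirects(SAMPLE):
        print("suspicious rule:", rule)
```

A rule like this is not malicious on its own, so any hit is best treated as a prompt to check what is actually listening on the target port.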

In total, the malware attempts to find and kill competitors, deploys persistence via system services, runs a Docker container to proxy traffic to the Remote Docker API port, deploys a crypto-miner onto the device, and implements a dead man’s switch designed to clear the routing trap should the main IP address fail to respond.

After some research, I reached the following conclusions:

- sys-net-d appears to be a Sliver agent payload (consistent with the Sliver C2 already exposed on the IP address).

- sys-compute is an XMRig mining binary, avoiding the overhead of having to download it dynamically on victim systems.

While digging into other directories, I found multiple older versions of their persistence and deployment scripts along with potential targets in the ‘ocean_strike.tar.gz’ file, as shown below:

The observed sys-net-d and sys-compute are the same Sliver payload and XMRig binary previously noted. There are variations of persistence scripts present, but all ‘deployer’ scripts are 0-byte files as of the time of this analysis. Sys-guard is a simple shell script that configures ocean-miner along with a decoy Docker container, another variation of Monero mining.

More interesting are the files labelled ‘fortress_results’. These appear to be scans of potential victim IP addresses: each victim had an exposed Remote Docker API port that the threat actor tested to determine the level of access they could achieve remotely, attempting to create a privileged container to facilitate mining operations.

Revisiting one of the earlier files, we identified another Monero miner staging script named ‘cuckoo_stager.sh’. This is similar to the ‘Ocean Strike’ payload but had slightly different checks for containers, competitors, deployment, persistence, and fail-over operations. A snippet is shown below.

In essence, this script attempted to:

- Identify whether it is running in a container by checking for ‘docker’ in /proc/1/cgroup and for the presence of systemd

- Kill other competing mining processes

- Download xmrig and Yggdrasil payload binaries from the main control address (144.31.106[.]169)

- Configure and launch xmrig for Monero mining

- Persist itself via cron jobs

- Hide initial access by deleting bash history and timestomping xmrig binary and configuration files to appear older than they are

- Delete the initial stager (/tmp/cuckoo_stager.sh)

- Optionally start the Yggdrasil mesh network in an attempt to obfuscate traffic destinations and avoid initial detection at the network level
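The container check in the first step can be illustrated with a short sketch. It assumes the systemd test looks for the `/run/systemd/system` directory. The script's actual systemd check and the way it combines the two signals are not shown in this post, so the function names are hypothetical and the two results are reported separately.

```python
from pathlib import Path


def cgroup_mentions_docker(cgroup_text: str) -> bool:
    """True if any line of a /proc/1/cgroup dump mentions 'docker'."""
    return any("docker" in line for line in cgroup_text.splitlines())


def container_signals(cgroup_path: str = "/proc/1/cgroup",
                      systemd_dir: str = "/run/systemd/system") -> dict:
    """Collect the two signals separately; how they are combined is left open."""
    try:
        text = Path(cgroup_path).read_text()
    except OSError:
        text = ""  # unreadable or missing file: treat as no evidence
    return {
        "cgroup_mentions_docker": cgroup_mentions_docker(text),
        # Assumption: a booted systemd leaves this runtime directory behind.
        "systemd_present": Path(systemd_dir).is_dir(),
    }


if __name__ == "__main__":
    print(container_signals())
```

On hosts using cgroup v2, PID 1's cgroup file inside a container often reads just `0::/`, so a string match on ‘docker’ can miss containers entirely.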

There was a final script named ‘dead_man_switch.sh’ that appears similar in nature to the fallback used in the original ‘Ocean Strike’ script: it periodically checks whether a specific heartbeat is being populated. If no heartbeat is detected within a 30-minute period, it assumes the script has failed catastrophically and attempts to re-expose the original Docker API ports instead of the mimicked port, so that the actor can regain remote access.
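The timeout decision at the core of this fallback is simple to express. The sketch below only models that decision, assuming the heartbeat is recorded as a Unix timestamp. How the script actually stores its heartbeat is not shown in this post, and the function name is hypothetical.

```python
import time
from typing import Optional

HEARTBEAT_TIMEOUT_SECONDS = 30 * 60  # the 30-minute window described above


def heartbeat_is_stale(last_heartbeat: float,
                       now: Optional[float] = None,
                       timeout: float = HEARTBEAT_TIMEOUT_SECONDS) -> bool:
    """True once more than `timeout` seconds have passed since the last heartbeat."""
    if now is None:
        now = time.time()
    return (now - last_heartbeat) > timeout


if __name__ == "__main__":
    # A heartbeat written 31 minutes ago would trip the switch.
    print(heartbeat_is_stale(time.time() - 31 * 60))
```

For detection purposes, the practical consequence is that blocking the C2 address without removing the script may cause the host to re-expose its Docker API after the timeout.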

Conclusions

This is an interesting malware variant that explicitly targets the Remote Docker API, attempting to create highly privileged containers on exposed ports that are then used to run Monero-mining operations. This is not a new technique, but the source and implementation are still worth studying from a defensive perspective so that we can further harden relevant systems.

Once the malware is deployed, the Docker API port (2375) is then proxied to 2376 via a ‘decoy’ container and iptables, likely to ensure that other actors cannot launch competing resources – but in such a way that the C2 IP address can still reach the original port without interference.
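When auditing your own hosts, one way to tell a genuine Docker daemon from a decoy that answers every request with a generic 200 is to inspect the body returned for `GET /version`, which a real Docker Engine answers with JSON that includes an `ApiVersion` field. The sketch below classifies a response that has already been fetched. It assumes the decoy does not emulate that endpoint, which this analysis did not verify, and the function name is hypothetical.

```python
import json


def classify_docker_api_response(status: int, body: bytes) -> str:
    """Classify a reply to GET /version on a suspected Docker API port.

    Returns "docker-api" if the body is a JSON object with an ApiVersion field,
    "generic-200" for a 200 without it (possible decoy), otherwise "other".
    """
    if status != 200:
        return "other"
    try:
        data = json.loads(body.decode("utf-8", errors="replace"))
    except ValueError:  # json.JSONDecodeError is a subclass of ValueError
        return "generic-200"
    if isinstance(data, dict) and "ApiVersion" in data:
        return "docker-api"
    return "generic-200"


if __name__ == "__main__":
    print(classify_docker_api_response(200, b'{"Version": "24.0.7", "ApiVersion": "1.43"}'))
    print(classify_docker_api_response(200, b"OK"))
```

A "generic-200" result on port 2375 is only a hint, but combined with an unexpected container publishing port 2376 it is worth a closer look.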

Monero mining attacks are not new and neither is inhibiting further use of the Remote Docker API (https://www.akamai.com/blog/security-research/new-malware-targeting-docker-apis-akamai-hunt) – but the naming conventions and strategies used here seem to indicate this may be part of a more organized campaign.

Be on the lookout for the below indicators in your network.

Indicators of Compromise

- 144.31.106[.]169
  - C2 Address

- 45g4uGaXz3PeaN94ns6CwXDa2oxNFKrYPAEjqZSjhem2RQju9JtuQgbSLrvq9pN35dUeUgS8996G7P9eZ9bX78m4CjwGeP3
  - Monero Wallet

- cuckoo_stager.sh
  - Payload Variation deploying mining, persistence, etc

- mimicry.sh
  - Shell Script serving as Initial Access payload
  - SHA256: 148b56141e04ff98c408c50320eb76398854f0a071c3276779b8d0db99332157

- predator_v5_mimicry.sh
  - SHA256: dba481116b7c451bf018518c02431b3c342c92c047d77ceaacedcf6a44e376c4

- dead_man_switch.sh
  - SHA256: 31a4271fc2c4ab2a7939358f98e7cb339115ede6180a500f7a441b468e09a8d6

- persist.sh
  - SHA256: 5e37c6b3bf5192e334d6ff0c5995f73f60b27b18cbdcedcbcc579d32aeea2e7c

- persist_v4_proxy.sh
  - SHA256: 8ceebbd3112d462ff6aa00b6e5330b75d29c872ee8dee86bbba968c88fea857a

- predator_v3_ocean.sh
  - SHA256: a07a12709f8fef2b4667fcbd8947f670f2bfbf92751553184fb3ed8f93679537

- sys-compute
  - XMRig Binary
  - SHA256: 92dcc363ed05c5e4ae9008f7d0d41b1ad1ae9caead9d4f3598c566b185078b4b

- sys-net-d
  - Sliver Payload
  - SHA256: 448fbd7b3389fe2aa421de224d065cea7064de0869a036610e5363c931df5b7c

- sys-guard
  - Shell Script configuring ocean-miner
  - SHA256: 3aad38a1792dfeede3cb47181bd3570047e3c71b659ebdf811fc5e2bae0bc9d5

- /dev/shm/.sys/, /dev/shm/.sys-cache
  - Directories created to host payloads/binaries/configs
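The file hashes above can be swept for on a host with a short script. This is a minimal sketch: the SHA256 values are copied from the indicator list, while the function names and the default search root are hypothetical choices for illustration.

```python
import hashlib
from pathlib import Path

# SHA256 values copied from the indicator list above.
KNOWN_SHA256 = {
    "148b56141e04ff98c408c50320eb76398854f0a071c3276779b8d0db99332157": "mimicry.sh",
    "dba481116b7c451bf018518c02431b3c342c92c047d77ceaacedcf6a44e376c4": "predator_v5_mimicry.sh",
    "31a4271fc2c4ab2a7939358f98e7cb339115ede6180a500f7a441b468e09a8d6": "dead_man_switch.sh",
    "5e37c6b3bf5192e334d6ff0c5995f73f60b27b18cbdcedcbcc579d32aeea2e7c": "persist.sh",
    "8ceebbd3112d462ff6aa00b6e5330b75d29c872ee8dee86bbba968c88fea857a": "persist_v4_proxy.sh",
    "a07a12709f8fef2b4667fcbd8947f670f2bfbf92751553184fb3ed8f93679537": "predator_v3_ocean.sh",
    "92dcc363ed05c5e4ae9008f7d0d41b1ad1ae9caead9d4f3598c566b185078b4b": "sys-compute",
    "448fbd7b3389fe2aa421de224d065cea7064de0869a036610e5363c931df5b7c": "sys-net-d",
    "3aad38a1792dfeede3cb47181bd3570047e3c71b659ebdf811fc5e2bae0bc9d5": "sys-guard",
}


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large binaries are not loaded into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def sweep(root: Path) -> list:
    """Return (path, indicator name) pairs for files under root matching a known hash."""
    hits = []
    for path in root.rglob("*"):  # rglob also descends into dot-directories
        try:
            if path.is_file() and not path.is_symlink():
                name = KNOWN_SHA256.get(sha256_of(path))
                if name:
                    hits.append((path, name))
        except OSError:
            continue  # unreadable entries are skipped rather than aborting the sweep
    return hits


if __name__ == "__main__":
    # Hypothetical starting point: the staging area named in the indicators.
    for found, indicator in sweep(Path("/dev/shm")):
        print(f"{found}: matches {indicator}")
```

Hash matches only catch unmodified copies, so the directory names and service names described earlier remain useful as secondary checks.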